The recent demonstrations of hacks on everything that moves suggest that there is a vast market opportunity for those who can uncover exploitable security holes.

The criminally minded may use these discovered vulnerabilities for a quick payday, offering the findings to the highest bidder on the darknet market.

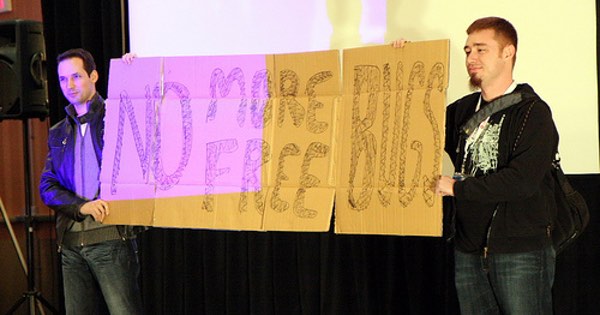

Ethical researchers prefer to report their findings to the manufacturers for remediation and possibly a reward. This reward, known as a bug bounty, has become a topic of much discussion, and even of one serious study.

The question that comes to mind is, do bug bounties work?

The main challenge in the industry is that there is a dramatic lack of agreement about whether or not to reward those who discover these weaknesses.

In some cases, even the most “hacker-friendly” organizations have been inconsistent about whom they choose to reward.

Facebook, for instance, has been known to reward some researchers, while punishing others.

Some firms want no part of bug bounty programs at all, as evidenced dramatically by the recent public disclosure of vulnerabilities in OS X, in which the security researcher declared he had no interest in informing Apple in advance.

And just this month there was the odd, now infamous statement by Oracle CSO Mary Ann Davidson that railed against customers looking for bugs in the company’s software. The bizarre post was quickly removed from Oracle’s blog (but retained for posterity in web archives).

Most corporations that offer bug bounties are clear about the stipulations for testing and reporting prior to offering the reward. This is where some researchers run afoul of the corporate and legal rules.

While the Computer Fraud and Abuse Act may be broadly and incorrectly applied in some circumstances, rewarding reckless behavior in the name of bounty hunting would send entirely the wrong message.

For example, encouraging someone to remotely control a plane filled with passengers would not be the best business decision for an airline.

Why do some researchers work within the framework of the bounty rules, and others choose a more cavalier approach? This is the question that makes one wonder if bug bounty programs are effective.

Fortunately, we may one day know the answer. Earlier this year, security industry analyst Keren Elazari raised that question on the Paul’s Security Weekly webcast, and she is now working toward research that could answer it.

It will be interesting to see the results of Keren’s work. More importantly, if bug bounty programs are working, it will be interesting to see whether they help the security community mature. That may require another study.

What do you think? Do bug bounties work? Take our quick poll, and leave a comment below.


No system that involves people is ever going to work flawlessly; however, I feel the idea behind bug bounties is solid. What we need to see are more companies giving bigger bounties for the problems uncovered, instead of the morsels that many continue to offer up. You can't address a balancing problem if you're unwilling to add weight to your side of the equation.

Personally, I would prefer it. It would give me more confidence in buying online.

Hearing again and again that another firm has had its website hacked makes me wonder if it's worth the risk of buying from certain companies.

There is no binary answer. Assume a corporation is honourable and rewards everyone properly. That's fine for those who do participate, but not every researcher will find that model appealing. I am referring not so much to those who abuse a flaw for personal gain as to those who practise full disclosure. It is unfortunate, but some vendors don't care about flaws in any constructive way (the Oracle example doesn't surprise me, but they aren't the only example; administrators are also guilty[1]). That is, they don't acknowledge the flaws, or if they do, they don't try to fix them. Sometimes releasing an exploit is the only way to get something fixed (with the unfortunate consequence that some might abuse it, though they might have been abusing it already; you can't be sure).

The problem is this: different vendors act differently (as expected, for better or worse), and so do researchers. There are numerous reasons for this, but it all comes down to how each party (vendor or researcher) has developed and how it prefers to work with others.

SUMMARY: bug bounties work in some cases but not in others, so you cannot simply say they work or they don't. They have their uses, but a corporation offering bug bounties will never stop every researcher from publicising a flaw in their own way (and a researcher might be biased against one corporation more than another). Offering incentives certainly isn't a bad idea, but the question isn't a simple matter of whether it works or not.

[1] Take, for example, the Ramen worm that attacked Red Hat Linux some years back. It was more like a collection of utilities that exploited a variety of flaws in different services (some of which had had a notoriously bad reputation for many, many years, unless I'm misremembering which services it attacked). The thing is, patches for those bugs had been readily available for some time. But the administrators were negligent, and so they were hit by this worm (the worm did plug the holes, though it also defaced websites in a very obvious way). The fact is, some people do not care about security; the Ramen worm demonstrated this quite well (and fixing the flaws that allowed breaches is by no means a new concept). Vendors and administrators are equally guilty here (not every vendor or every administrator, but some).

IMHO, bug bounty programs (like many things we do in information security) are phrenology/cranioscopy: they provide a sense of a scientific approach, but they only touch the surface. Unless you can investigate the source code and perform design and configuration analysis, what you end up with is a false sense of where you stand.

"Unless you can investigate the source code"

Correction: unless you write the source code yourself (and there are some technicalities that make this even more complex). If you're curious about why this is, look up Ken Thompson's article 'Reflections on Trusting Trust'.