Tencent is the latest Chinese software company developing an anti-virus product to have been censured by independent testing agencies in less than a week.

Last week, Qihoo had its knuckles rapped and certifications removed by AV-Test.org, AV-Comparatives and Virus Bulletin, after being found cheating in recent tests to falsify its detection capability.

Now the testing bodies have named Tencent as guilty of gaming tests to falsify its abilities in performance tests. Worse still, according to the testers, the tweaks made for the testers could actually make the product’s real-life detection rates *worse*.

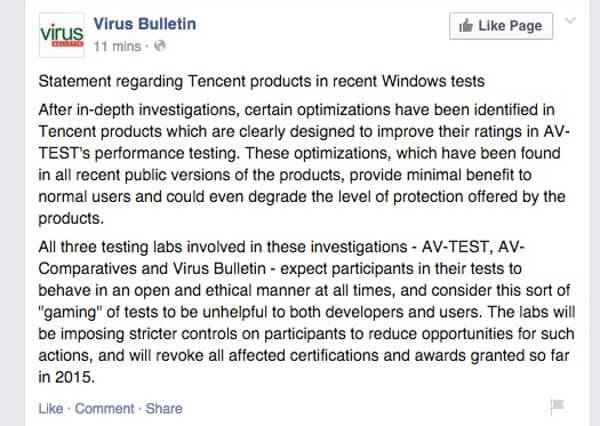

Here is the announcement that Virus Bulletin has posted on Facebook:

After in-depth investigations, certain optimizations have been identified in Tencent products which are clearly designed to improve their ratings in AV-TEST’s performance testing. These optimizations, which have been found in all recent public versions of the products, provide minimal benefit to normal users and could even degrade the level of protection offered by the products.

All three testing labs involved in these investigations – AV-TEST, AV-Comparatives and Virus Bulletin – expect participants in their tests to behave in an open and ethical manner at all times, and consider this sort of “gaming” of tests to be unhelpful to both developers and users. The labs will be imposing stricter controls on participants to reduce opportunities for such actions, and will revoke all affected certifications and awards granted so far in 2015.

It seems to me that Windows users have enough to worry about with malware, without also worrying that vendors are fiddling with security products in a way that weakens detection rates just to make them look faster in independent tests.

I notice that the tester isn't claiming that the AV vendor gave the tester a different version of the product from the one given to the public. That would indeed be naughty. But they're not being accused of that.

They don't give any details about what the AV vendor did. Until they do, I'd be inclined to look at the possibility that the test protocol is inadequate. Some transparency in this would be nice!

If a test protocol faithfully duplicates real-world usage (as you'd expect), then it wouldn't actually be possible for an AV vendor to "cheat".

Of course, you can't expect the tester to announce "We've discovered a flaw in our test protocol". Obviously, they'd rather say "someone is gaming the test".

And as someone who was involved in developing AV software 25 years ago, and who saw some truly awful test protocols, I'm inclined to suspect the test protocol.

I agree that more information about precisely what was discovered would be good.

There are perhaps some clues in an earlier statement from AV-Test.org (see https://grahamcluley.com/revealed-anti-virus-product-cheat/ ):

"We have now started to evaluate the possible manipulation of our performance testing. We have found strong evidence that another company, not Qihoo, is optimizing their product to do well in our performance test by excluding certain files and processes from checking. This is based on filenames and process names and can pose a security risk as well! We will check with AV-Comparatives and VB100 to verify our findings and will let you know as soon as we have the final data."

Of course, now we know what VB/AVC/AVT concluded.

I'd still like more transparency. If the AV is "optimizing" [sic] by excluding certain files and processes, then that could be bad, or it could be good. For example, if you've done a cryptographic checksum of a file and have also verified that it's uninfected, then next time, you don't need to check the file for viruses, you only need to check the checksum is unchanged. Ditto processes.
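To make the "good" kind of exclusion concrete, here is a minimal Python sketch of a checksum cache. It is only an illustration of the idea described above, not how any real product works: `sha256_of`, `scan_with_cache` and the toy engine in `demo_checksum_cache` are hypothetical names invented for this example, and the "engine" simply looks for the bytes `EVIL`.

```python
import hashlib
import os
import tempfile


def sha256_of(path):
    """Return the SHA-256 hex digest of a file's contents."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def scan_with_cache(path, known_clean, full_scan):
    """Skip the full scan only when the file's content hash is already known clean.

    known_clean is a set of digests; full_scan is a hypothetical callable
    that returns True when the file is clean.
    """
    digest = sha256_of(path)
    if digest in known_clean:
        return "skipped (checksum unchanged)"
    if full_scan(path):
        known_clean.add(digest)
        return "scanned: clean"
    return "scanned: INFECTED"


def demo_checksum_cache():
    # Toy stand-in for a scanning engine: flags files containing b"EVIL".
    def toy_scan(path):
        with open(path, "rb") as handle:
            return b"EVIL" not in handle.read()

    with tempfile.TemporaryDirectory() as folder:
        path = os.path.join(folder, "iexplore.exe")
        with open(path, "wb") as handle:
            handle.write(b"harmless bytes")
        cache = set()
        results = [scan_with_cache(path, cache, toy_scan)]       # first sight: full scan
        results.append(scan_with_cache(path, cache, toy_scan))   # unchanged: skipped
        with open(path, "wb") as handle:
            handle.write(b"EVIL payload")                         # same name, new content
        results.append(scan_with_cache(path, cache, toy_scan))   # hash differs: rescanned
    return results
```

The point of the sketch is that the skip decision depends on the file's content, not its name: once the content changes, the file is scanned again even though it is still called `iexplore.exe`.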

And if the testing protocol uses a fixed set of files/filenames/processes, then that's a protocol weakness, which could easily be fixed at the cost of a small amount of work and time. Renaming files isn't difficult.

So, given the lack of transparency from the testing people, we can't tell whether the wrongful optimizing [sic] is being done by the AV vendor (as the tester claims) or by the AV tester (who would have us believe that they have a good testing protocol, but if they've published their test protocol, I don't know where to read it).

Also, I'd like to hear from the accused on this – so far, I've only heard one side of this, and in my experience, it's usually a good idea to hear both sides of a case.

I could give you a fair few examples of badly flawed test protocols that I've met in the past. This is from 25 years ago, and I'd like to think that things have improved, but I'd rather not make unfounded assumptions on this.

How many people are testing AV test protocols? And if the answer is "none", what's the incentive to AV testers to get it right?

So, is VB/AVC/AVT willing to be transparent on this? And could we get a response from Qihoo?

Regarding Qihoo they have issued a statement: http://www.prnewswire.com/news-releases/qihoo-360-statement-regarding-cheating-in-lab-test-300076309.html

They have also, separately, denied that they offer their "employee of the year" a night with a Japanese porn star: http://uk.businessinsider.com/qihoo-360-awards-night-with-porn-star-julia-kyoka-to-employee-2015-2

Hi drsolly,

If you carefully read through the article and the author's reply to you I think you will see that the answers to your questions were already provided.

a) “After in-depth investigations, certain optimizations have been identified in Tencent products which are clearly designed to improve their ratings in AV-TEST's performance testing. These optimizations, which have been found in all recent public versions of the products, provide minimal benefit to normal users and could even degrade the level of protection offered by the products.”

“These optimizations … provide minimal benefit to normal users and could even degrade the level of protection offered by the products.”

I interpret this statement to mean that by skipping files the product doesn't speed up a computer very much, and that excluding files from scans places the user at greater risk.

b) “We have found strong evidence that another company, not Qihoo, is optimizing their product to do well in our performance test by excluding certain files and processes from checking. This is based on filenames and process names and can pose a security risk as well!”

“… another company … is optimizing their product to do well in our performance test by excluding certain files and processes from checking. This is based on filenames and process names and can pose a security risk as well!”

If Tencent were excluding certain files from being scanned after first verifying them as clean by cryptographic checksum (as other security products do) then there would be no problem. This statement indicates that Tencent is not excluding files which have been verified by cryptographic checksum. They are skipping files simply because they have the correct name.

By the way, the article is about Tencent but to answer your question regarding Qihoo, they have released at least one statement.

http://blog.360totalsecurity.com/en/qihoo-360-statement-regarding-cheating-in-lab-test/

There is also a second, translated statement supposedly from Qihoo regarding their apparent withdrawal from future AV-Comparatives tests. The link was posted on Wilders security forum. I am not vouching for its authenticity.

http://www.hihuadu.com/2015/05/02/360-withdrew-av-c-reviews-traditional-anti-virus-evaluation-criteria-behind-20812.html

Hi,

Aside from gaming the testing, the Chinese AV vendors often put out dodgy advertising, like Qihoo's banner ads that say:

"PHONE IS INFECTED? scan & clean the viruses now!"

Or Cheetah Mobile's Clean Master app:

"Clean virus and trash on your phone, SCAN NOW!"

And that's just two of them. I reported Qihoo to Google AdWords for theirs, as they border on scareware. I also believe that they are responsible for some of those pop-up scareware ads I've seen in the past. Cheetah Mobile had their apps pulled from the Play Store for a short while a year or so ago, for something to do with advertising I think. You can read about it here: https://nakedsecurity.sophos.com/2014/04/04/google-takes-aim-at-deceptive-advertising-of-play-store-apps/

This all points to a weakness in the testing protocol. Randomising the filenames before doing a static scan would add just a few seconds to the test, and the code to do it would take just a few minutes to write. Failure to do this is a test protocol weakness.

The testers should implement this immediately, if they think that at least one, and maybe two companies are relying on their file names. Because if two can do it, so can many others.

And I would propose forming an organisation to examine and rate test protocols, because as far as I can see, A) there isn't one and B) without such examination, testers can get away with blaming products for their own deficiencies. Perhaps Graham Cluley, as an independent, could found an organisation to examine and certify test protocols?

Examples, taken from my experience (which is, of course, 25 years out of date).

1) Test suites that contain many files which are not malware (the most egregious example was a two byte file).

2) A test which ran one product; the tester didn't realise that running that product in the way that he did, cleaned (or attempted to clean) all the infected files. Little wonder that the next product he tested on those cleaned files, found very few viruses and was rated as very poor.

3) A test suite that consisted *entirely* of files that were not viruses. The tester assumed that they were.

4) A test that used a collection of files; some were viruses and some were not. The tester assumed that any file that any product flagged as infected, was a virus. Naturally, the product with the worst false alarm rate, won the test.

I would hope and pray that things are better these days. But I wouldn't rely on it, especially given the fixed-filename flaw that this discussion has revealed in the VB/AVC/AVT protocol. I wonder what other flaws there are?

OK, googling around, I'm seeing reports that the problem was that the Qihoo product that they provided for testing used the Bitdefender engine, whereas the product they ship to consumers uses their own QVM engine.

How is this possible? Qihoo offer a free antivirus for PC (for example). Any sensible test protocol would start off by downloading the latest version from the publicly available source. So why on earth did the VB/AVC/AVT protocol start off by accepting the product from the vendor? That is begging for trouble; it's trusting the vendor, and the whole point of testing a product is that you don't trust the vendor. You might as well just ask the vendor "What score would you like?" and print that.

And if a vendor doesn't offer a free version, then there's still no excuse for getting product from the vendor, because you *cannot* know that you're getting what the consumer would get. In that situation, you have to *purchase* the product; that way, you're getting genuine product.

Hi drsolly,

The problem is not with the testing agencies or with the protocols. All of the security companies who have submitted their products for testing have agreed to the protocols in advance. Is there room for improvement in the test protocols? Possibly, but that is a subject for a different discussion. Tencent agreed to the protocols and then tried to cheat in order to get a higher score.

The testing agencies did nothing wrong. This is not a problem which can be solved by randomizing the scan order in a test, or by not using a “fixed list” of virus samples. In an effort to make its product appear to scan more quickly than its competitors, Tencent was apparently excluding files from scanning which had names of common, well-known files. In other words, they were not checking certain files for signs of infection. Their product had been programmed to automatically label certain files as being clean based only on the name of the file.

For example, they might have been skipping over files with the same name as Windows system files. Such files are safe if they are genuine, but the file names can easily be spoofed by malware. If, for example, Tencent programmed their scanner to skip over a file named iexplore.exe because it has the same name as the well-known Internet Explorer executable, a nasty virus named iexplore.exe would be free to do anything it desired on a PC which was “protected” by Tencent. This practice was not only extremely amateurish, it also placed a lot of Tencent users' PCs at risk.
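The risk described above can be shown with a toy Python sketch. This is purely illustrative and makes no claim about how Tencent's product is actually implemented: the exclusion list `SKIP_BY_NAME`, the `looks_malicious` check (which just looks for the bytes `EVIL`) and the sample files are all invented for the example.

```python
# Hypothetical exclusion list keyed only on file name.
SKIP_BY_NAME = {"iexplore.exe", "explorer.exe"}


def looks_malicious(content):
    """Toy detection rule standing in for a real engine."""
    return b"EVIL" in content


def scan_skipping_trusted_names(files):
    """Scan a {name: bytes} mapping, but trust any file whose name is on the list."""
    flagged = []
    for name, content in files.items():
        if name.lower() in SKIP_BY_NAME:
            continue  # never inspected, whatever it contains
        if looks_malicious(content):
            flagged.append(name)
    return flagged


def scan_everything(files):
    """Scan every file regardless of its name."""
    return [name for name, content in files.items() if looks_malicious(content)]


SAMPLES = {
    "iexplore.exe": b"EVIL payload hiding behind a trusted name",
    "invoice.exe": b"EVIL payload",
    "notes.txt": b"nothing to see",
}
```

With these samples, `scan_skipping_trusted_names(SAMPLES)` flags only `invoice.exe`, while `scan_everything(SAMPLES)` also catches the spoofed `iexplore.exe`. The name-based scanner is faster because it does less work, and that is exactly the problem.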

"All of the security companies who have submitted their products for testing have agreed to the protocols in advance."

That's the last thing you want! The test protocol must be robust, but need not be agreed to by the AV companies.

" Is there room for improvement in the test protocols? Possibly, but that is a subject for a different discussion."

Uh, no. My point is that perhaps the problem here isn't cheating by AV vendors, it's inadequacy of test protocol.

"This is not a problem which can be solved by randomizing the scan order in a test"

That's not what I'm suggesting. I'm suggesting that the filenames be randomised. So, for example, iexplore.exe would be renamed to froghifn.exe; all files would be renamed to something, and that something would be different for each test. So it wouldn't actually be possible to "game" the system in the way that it's been suggested that it has. Such a rename would need just a few seconds to do, and maybe an hour to write the code (a once only task) to do it.
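As a rough idea of how little code the rename step needs, here is a minimal Python sketch. It assumes the test set is a flat folder of files used for a static scan; the function names `randomise_filenames` and `demo_randomise` are made up for this example, and a real protocol would need more care (subfolders, installed applications, running processes).

```python
import os
import secrets
import tempfile


def randomise_filenames(folder):
    """Rename every regular file in folder to a random name, keeping its extension.

    Returns a mapping of new name -> original name so results can be traced back.
    """
    mapping = {}
    # Take a snapshot of the listing first so renamed files are not revisited.
    for original in sorted(os.listdir(folder)):
        source = os.path.join(folder, original)
        if not os.path.isfile(source):
            continue
        extension = os.path.splitext(original)[1]
        new_name = secrets.token_hex(8) + extension
        # Extremely unlikely, but avoid clobbering an existing file.
        while os.path.exists(os.path.join(folder, new_name)):
            new_name = secrets.token_hex(8) + extension
        os.rename(source, os.path.join(folder, new_name))
        mapping[new_name] = original
    return mapping


def demo_randomise():
    with tempfile.TemporaryDirectory() as folder:
        for name in ("iexplore.exe", "notepad.exe"):
            with open(os.path.join(folder, name), "wb") as handle:
                handle.write(name.encode())
        mapping = randomise_filenames(folder)
        return mapping, sorted(os.listdir(folder))
```

Because the names come from `secrets.token_hex`, they differ on every run, so a scanner cannot rely on a fixed list of names. The returned mapping lets the tester translate results back to the original sample names afterwards.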

Important principle. When you're doing a security action, you have to assume that the attackers are capable of being twisted and devious, not straight and honest (even if you think they are). And testing AV products is a security action.

The more this discussion continues, the more I think that this is a failure of the test protocol, being reframed by the testers as "the AV company cheated". And if the testers don't realise that it's a failure of their test protocol, they won't improve it.

Because if the test protocol has this simple flaw, it probably has others that haven't been noticed yet by the testers, although they might have been noticed and exploited by the AV companies, who are perhaps not all as honest and upright as the companies were 25 years ago.

Hi drsolly,

You wrote:

"Any sensible test protocol would start off by downloading the latest version from the publicly available source."

According to the owner of AV-Comparatives, they obtain the security applications they test in exactly the manner you suggested.

http://www.wilderssecurity.com/threads/investigation-in-progress-by-av-comparatives.375599/page-4#post-2486271

" "submitted" does not mean "send"; it only mean they tell e.g. which version to use (e.g. free, AV, IS, TS, etc.) the products are downloaded from official websites like you guys do."

Hi drsolly,

A group is attempting to create testing standards as you suggested. The group is controversial but is, I hope, a step in the right direction.

Anti-Malware Testing Standards Organization

http://www.amtso.org/

AMTSO has posted a statement about the recent controversy here: http://www.amtso.org/PR20150506

If they were downloading it from the website they would have the same version as everyone else. Clearly they are having them submitted, which of course would allow companies to cheat.

A better question would be: if these labs are top in the field, how did they fail to find something amiss? Qihoo did two things: turned the QVM2 engine off and enabled the Bitdefender engine. The product ships with all of them, and it's super clear when something is on or off. This tells me the testing protocol is flawed.

If they really wanted to score high, Qihoo would enable all their engines for testing and for users. It's not done, though, because this uses more computer power and storage than some users could handle.

As for Tencent, they truly cheated by modifying which files are scanned. However, that does not mean the product doesn't protect your computer, which is the impression this whole thing gives off.

The testing is flawed. I don't think Qihoo truly cheated. Tencent, you cheated, but your product has a high protection score in personal tests, so well done.

www.Amtso.org, posting dated 6 May 2015

How? By e.g. "submitting a different product for review than what was actually offered their users" or by "having optimizations in the product only to perform better in a performance test".

So, AMTSO seems to be saying that the product wasn't just downloaded from the place the consumers would download from; the testers were given a different product version. TEST PROTOCOL FAIL.

And that there were "optimizations" that only work in a performance test. TEST PROTOCOL FAIL.

As AMTSO says, "bad testing harms us all".

The answer, I suggest, is "better testing", not just "blame the vendors". As AMTSO says, "This situation is not unlike someone buying a car based on a review highlighting its great NCAP rating for safety, only to find that the model purchased does not even include an airbag." Well, the test protocol should include "check that the car has an airbag", not "ask the car maker if the car has an airbag".

So... who do we trust? Who is the best anti-virus and anti-malware provider? What about PC-Matic?