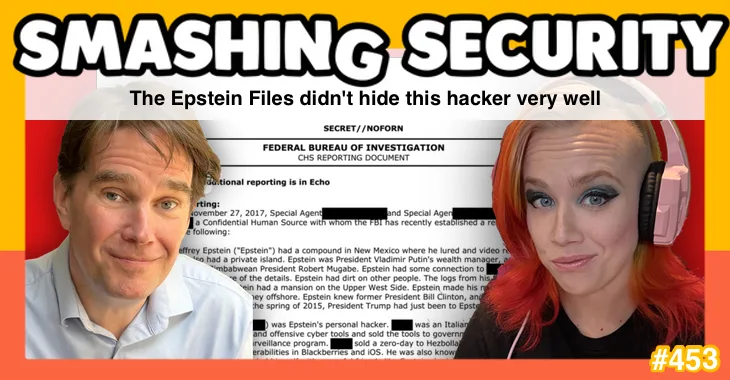

Supposedly redacted Jeffrey Epstein files can still reveal exactly who they’re talking about – especially when AI, LinkedIn, and a few biographical breadcrumbs do the heavy lifting.

Sloppy redaction leads to explosive claims, and difficult reputational consequences for cybersecurity vendors, and we learn how trust – once cracked – can be almost impossible to fully restore.

Elsewhere, the spotlight turns to insider threat in the age of AI, after a senior US cybersecurity official uploads sensitive government material into the public version of ChatGPT. Oops.

All this, and much more, in episode 453 of Smashing Security with cybersecurity veteran and keynote speaker Graham Cluley and special guest Tricia Howard.


This transcript was generated automatically, probably contains mistakes, and has not been manually verified.

Obviously people are going to click on things, so it's— yeah, anyway.

Hello, hello, and welcome to Smashing Security episode 453. My name's Graham Cluley.

And I've dabbled any and everywhere. So where my heart lies is in the technical malware analysis, your zero-day stuff, the really fun.

And I try and make those stories sound very interesting and creative and musical most of the time.

And people can bring so many capabilities and unique talents because of that. It's good that we're not getting everyone from the same pool, isn't it?

Listen, let me tell you, there is no stress like hopping on stage when you have a critical prop that is missing somehow, and now you have to make it out in front of hundreds of people who are expecting you to be perfect.

By the way, we are made for this. We deal with failure all the time. We are made for cybersecurity.

This week on Smashing Security, we're not going to be talking about how Notepad++ was hijacked by state-sponsored hackers for months, pushing targeted users to malicious updates.

You'll hear no discussion of how an app designed to help you quit watching porn was found to be leaking intimate details about users' sexual urges.

And we won't even mention how cybersecurity firm MicroWorld Technologies, the makers of eScan antivirus, was hacked to push out malware to its customers.

So Tricia, what are you going to be talking about this week?

All this and much more coming up on this episode of Smashing Security. Well, we've got time right now to hear from one of our sponsors, Passwork.

If you work in cybersecurity, you already know this. Most secrets don't get stolen, they leak.

Passwords pasted into chat tools, shared admin accounts, those spreadsheets that everyone pretends don't exist. Passwork is built to stop that.

It's a password manager and secrets management platform designed for organizations that want on-premise deployment, meaning your sensitive data stays on your own infrastructure under your control.

That matters if you're dealing with regulatory requirements, data sovereignty, or simply don't want your most critical secrets living in someone else's cloud.

From a security perspective, Passwork uses a zero-knowledge architecture with strong, openly documented encryption, and its design is regularly tested by independent security researchers.

Operationally, it's built for real teams, role-based access control, integration with existing identity systems, support for MFA, highly available architecture.

Designed to keep things running when parts of your environment fail.

And unlike those tools that look cheap until you start paying for them in time and stress, Passwork focuses on long-term stability, a public development roadmap, and a lower total cost of ownership.

Passwork, it's not just a password management platform, it's a secure, adaptable secrets manager built to meet your business needs.

To find out more, go to smashingsecurity.com/passwork. Passwork. That's smashingsecurity.com/passwork.

Now, Tricia, you know that when governments redact documents, they're gonna have a reason for it, aren't they?

It can be for legitimate, justifiable reasons that they don't want certain information getting out into the public domain.

It may be that they're defending people, they're protecting people's privacy. I mean, that's the whole point.

You know, you get your black marker, you cross out the sensitive bits, and the public gets to see everything else. But here is the thing with redaction.

It's not just a case of what you remove from view.

You've also got to think about what you leave behind, what you leave in the document which didn't get a great big splat of black marker all over it.

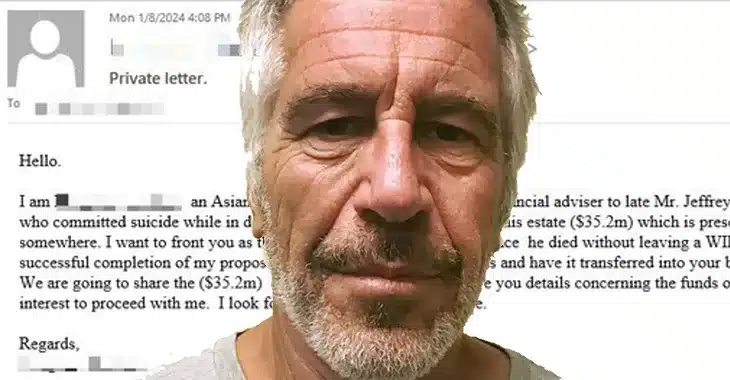

And this is in my mind right now because, of course, we've just seen the US Department of Justice, they've released another massive batch of files related to Jeffrey Epstein.

And we're talking millions of pages. Have you been following this at all?

I actually came across earlier today, there is an initiative to make it much easier to peruse. I found a website called jmail.world.

And what happens at jmail.world is they've completely replicated the Google Gmail experience. You are now in Jeffrey Epstein's Gmail account. So they've uploaded all of the— What?

Yep. All of the emails, all of the images, they have uploaded his flight plans, his Google Drive, and you can see everything through the Gmail platform.

So you can search just as easily as you can in Gmail. You can look in his sent folder.

You can look for the names of certain public individuals, and it will show you all of their emails. Wow.

But we're talking millions of pages, and one of the documents in there has caused some ripples in recent days in the cybersecurity industry, because buried in the Epstein files is a document from 2017 when an unnamed FBI informant made a claim.

They claimed that Epstein had his own personal hacker.

Now, I don't know whether to be surprised by that or not, because I think a lot of people with a lot of money may well have a legitimate reason for hiring a hacker.

Can I prevent myself from being hacked?

Maybe Jeffrey Epstein was acquainting himself with people who might have the methods for hacking into his organisation and reading his emails, and he may be slightly nervous about that.

You can easily understand.

And the name of the alleged hacker, as well as the informant, has been blacked out. It's hidden from view.

But the document goes on to tell us various pieces of information about this alleged hacker who it claimed was in contact with Jeffrey Epstein. It says he's an Italian citizen.

It says where he was born in Italy. It tells us that he was known for finding zero-day vulnerabilities in iOS and BlackBerry and Firefox.

It tells us even that he sold his company to a major cybersecurity firm, which they name in the document. It says he sold his company to them in 2017.

It tells us he became a vice president at that company following the acquisition.

So how long do you imagine it takes people to work out who this redacted individual, this alleged hacker, actually is?

It's like, instead of just saying what it is, it's like, listen, we're gonna dangle these carrots around, knowing the community that they're talking to, by the way, which is made up of a bunch of us who literally put the carrots together.

I mean, this is petty. It was petty.

But the truth is, I think this was done through incompetence. I think that—

And judging by online discussions I've seen, everyone else pretty much figured it out just as quickly.

They put it into an AI and it just spat out the answer immediately back to them. So, you didn't have to be Sherlock Holmes. This wasn't sophisticated detective work.

This is just the US Department of Justice redacting a name while leaving in what amounted to a biographical fingerprint of somebody.

It's like if I said to you, look, I'm not gonna tell you the name, right? There's a famous physicist. He's got wild white hair. He looks a bit crazy.

He stuck his tongue out in photographs, came up with E equals MC squared. You know, you know who I'm talking about.

Or if I say, look, I'm not gonna name the current US president, but he's a bit orange-hued. He's a reality TV host. He lives in Florida a lot.

Loves to big up his achievements and inflate the number of wars that he's fixed. You know, you're not really hiding anything at all if you do something like that, are you?

Being careful about putting out these little breadcrumbs in our digital presences, literally to avoid what happened.

I find it just so funny that it was so I guess in my mind, the reason I thought it was deliberate, and I'm not entirely serious about that, this is more for humor's sake, but it's just because it is so wild to me.

Some things could be a little bit more, a little bit more generalized, but you don't get any more specific than saying, hey, we sold this company and they became a VP of the company.

I mean, what? Anyone who has LinkedIn could figure this out.

And I want to be really clear, this document contains allegations from a confidential FBI informant. They're not proven facts. This is just one source making claims.

We have no way of knowing how reliable that source was.

But what is clear, if you look up places like jmail.world or the DOJ's website, Epstein and this particular individual were in contact.

There were emails, albeit fairly mundane communications, you know, arranging meetings and things.

But where it gets interesting, I think, for the cybersecurity world is that the informant's claims, if you take them at face value, they paint quite an alarming picture.

So they allege that this alleged hacker developed zero-day exploits, as we know, sold them to multiple governments, including the United States and the UK, and also allegedly to Hezbollah as well.

Apparently, it was paid for with a trunk full of cash and that he helped establish a Middle Eastern government's cyber surveillance program as well.

Again, these are unverified claims from a single source. We don't know if any of this is true.

But if we look through the implications here, what about this company that acquired this guy's startup back in 2017? They are one of the biggest names in enterprise cybersecurity.

Their software runs on so many hundreds of thousands of endpoints across countless major organizations worldwide.

Their technology is trusted to protect sensitive systems, critical data all over the planet, right?

Now imagine, you are a CISO at a Fortune 500 company who's a client of that particular well-known cybersecurity firm, which it appears hired this guy that the allegations were made about by this informant.

And you are reading these headlines over your morning coffee. What's going through your mind? What would be going through your mind at that point?

I mean, 'cause when you're thinking, oh man, that's such a different level of threat, I think, too.

It's anytime you're dealing with anything governmental, I think the impact is just so much stronger. And as in the private sector, oh man, I don't know.

Well, I honestly, I would just be speechless.

Your board might ask you, hang on, don't we use that company's software?

Your security team's going to ask, someone's going to want to know if there's any possibility, however remote, that there could be vulnerabilities or backdoors in the software that your firm is now running.

And what are you going to tell them? You don't know. All you can do is say, well, you know, it's probably all right. But you haven't got total confidence there.

And this is the thing when it comes to trust, when it comes to cybersecurity, it's incredibly hard to build trust, but it's so, so easy to damage and fracture trust.

And so this humongous security vendor who hired this guy, who bought his technology, bought his company, they haven't commented on any of this as far as I'm aware.

And honestly, what are they gonna say? They're gonna say, oh, we vetted him when we acquired his company and we're confident he didn't leave any backdoors behind when he left.

And, you know, hopefully that's right, but some people are still going to be nervous. And that guy meanwhile set up his own identity security company. What do their customers think?

If they have even the teeniest, tiniest suspicion that their CEO might have engaged in some shady practices in the past, it makes you nervous, doesn't it?

You may think it's a safer thing to do to go with someone else for which there aren't any people spreading rumors about.

Anytime we talk about a major incident, brand impact, the social impact very closely follows, and this is a terrible example of that.

I mean, because now not only do you have the potential technical issues, but now you have the social implications of this as well.

So we've seen this with some other vendors who have made decisions to keep certain hate speech things alive on their platforms, et cetera.

And the way that the community and the rest of the world responds to that.

So now not only are you having to justify that you need this product in the first place, because often security is trying to fight for budget anyway.

Now you have this solution and they're like, yo man, we can't have anything that comes close to touching the Epstein thing, obviously. So yeah, that's just a whole other thing.

This whole gray market where researchers are finding unpatched vulnerabilities and selling them to governments, and some people will be happy to sell them to Western governments, some people won't be happy about the idea of being sold to Western governments.

Some people will say, selling to Israeli intelligence is fine. Others will say, no, it's not. Some will say selling to Hezbollah is fine. They're unacceptable customers.

Others will say, no, they're not. But what kicked all of this off is this redaction failure.

And, you know, you can black out names all you want, but if you leave in nationalities and regions of birth and vulnerabilities they were known for researching or the names of the companies they acquired, you know, all this kind of information, you haven't redacted anything meaningful at all.

And I think what we all have to do now, just as you were saying, you know, when we post up on Facebook about our kids going to school or, you know, when our birthdays are and things like that, and we hopefully are moderating ourselves and thinking, oh, could these all be little breadcrumbs which could reveal more about me than I wanted?

Organizations need to think about this too, because in this age of Google, and LinkedIn and AI, a collection of specific biographical facts is an identity.

It is a way of identifying somebody. And it appears the Department of Justice didn't learn that. So it's not a technical failure.

The redaction itself, that was properly implemented on this occasion. There have been other times when they failed to properly black out text.

And I said, you know, I think as organizations, we all need to think about what a good redaction process is today, because how many supposedly anonymized documents are out there right now with the same problem, names removed, but identities completely obvious to anyone who spends 2 minutes thinking about it or asking an AI to help them think about it.

Yeah, it's probably more common these days.
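The point about biographical breadcrumbs can be sketched in a few lines of Python. This is only an illustration: the directory, the names in it, and the helper functions `matching_candidates` and `redaction_risk` are all made up for the example, and a real check would compare a document against far larger public sources. The idea is simply to count how many people still fit the facts a redacted document leaves visible. If the count drops to one, the name has not really been hidden.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    name: str
    nationality: str
    speciality: str
    sold_company_year: int
    later_title: str


# Entirely fictional directory, standing in for what anyone could
# assemble from public profiles and search results.
DIRECTORY = [
    Person("Alex Example", "Italian", "zero-days", 2017, "Vice President"),
    Person("Blake Sample", "Italian", "zero-days", 2015, "Engineer"),
    Person("Casey Placeholder", "French", "zero-days", 2017, "Vice President"),
    Person("Dana Fictional", "Italian", "cryptography", 2017, "Vice President"),
]


def matching_candidates(directory, **known_facts):
    """Return everyone whose attributes equal every fact left unredacted."""
    return [
        person
        for person in directory
        if all(getattr(person, field) == value
               for field, value in known_facts.items())
    ]


def redaction_risk(directory, **known_facts):
    """Count how many people the unredacted facts could describe.

    A result of 1 means the remaining details identify one person,
    even though the name itself was blacked out.
    """
    return len(matching_candidates(directory, **known_facts))


if __name__ == "__main__":
    facts = {}
    for field, value in [
        ("nationality", "Italian"),
        ("speciality", "zero-days"),
        ("sold_company_year", 2017),
    ]:
        facts[field] = value
        print(sorted(facts), "->", redaction_risk(DIRECTORY, **facts))
```

Each extra fact shrinks the pool of people it could be, which is why a reviewer might want to run this kind of count before release, not after.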

Now, if you've ever worked in IT and especially networking, you'll know when the network's working, nobody notices. When it isn't, everybody notices.

The problem is that most business networks are a mess of different providers, tools, dashboards, contracts, and crossed fingers.

And somehow, despite all that complexity, they're expected to be fast, secure, reliable, and magically fix themselves. And that's where Meter comes in.

Meter builds networks from the ground up. They deliver a complete full-stack networking solution— wired, wireless, and cellular— all as one integrated service.

And this is genuinely full-stack. Meter designs the hardware, writes the firmware, builds the software, manages the deployment, and runs the support.

They even take care of things like ISP procurement, routing, switching, firewalls, VPNs, DNS security, SD-WAN, and multi-site networking.

In other words, fewer vendors, fewer dashboards, fewer "who owns this problem" conversations, and far fewer late-night panic attacks.

Meter's approach is about real control, proper visibility, and networks that behave themselves.

And for IT leadership, it means something almost mythical in networking: predictability. If you're responsible for keeping the business online, you really should check out Meter.

So go to meter.com/smashing to book a demo now. That's M-E-T-E-R dot com slash smashing. And thanks to Meter for supporting the show. Tricia, what have you got for us this week?

So last time I was on, you talked about some amazing research that Anthropic had come out with and showed that a lot of the LLMs are actually super chill with blackmailing and ordering murder as long as they stay intact.

And so I thought it might be kind of funny to follow up with that with another story that came out very recently, which is one of those that is so funny because if you don't laugh, you're gonna cry sort of situations.

'Cause the universe really committed to the bit on this one.

The head of CISA, that's the Cybersecurity Infrastructure, you know, the government thing, uploaded sensitive government documents into the public version of ChatGPT.

So I wanna take a second here.

If you're not familiar with how LLMs work and all that stuff, when you get in with OpenAI or Anthropic, et cetera, you have the potential to create your own hosted version of it.

Anything that goes through it stays internal. It doesn't actually get used in any of the learning models. It's truly your own thing. That's not what we're talking about.

We're talking about the one that everybody uses, the one that you use to make sure that your emails aren't too aggressive or ask how to explain existentialism like you're five.

So these documents, I wanna point out too, we were talking about redaction and how do you decide what needs to be put out there and whatever.

We have classifications in place for this exact thing. And this one was called for official use only.

And last time I checked, putting it into the public ChatGPT is not exactly official use.

But I don't know, maybe I'm old fashioned. So here's why I find this story so freaking fascinating.

Because as we get new technologies in, AI and all these other wonderful digital connected things, the easier it is for bad things to happen with significantly less technical barrier to entry.

So depending on what was in the documents, right, this could have been a potential massive breach that happened by the government head.

It's so interesting to me that as technologists, as security people, we love to focus on the technical problem, right?

And what is amazing to me about that is that every technical problem was still created by a human.

Even everything that comes out of ChatGPT, that was built off of a ton of human interactions that are now collated, right? So arguably every threat is a human threat.

And especially when you're dealing with stuff like this, what's even scarier is they actually did have tools and things in place for DLP and all that stuff, which did fire.

It did alert, right? And nobody caught it even still. Not until after it had already happened. Yay.
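As a rough sketch of the kind of check a DLP tool might run before a document leaves the building, the Python below looks for a handling marking in the text and refuses the upload if it finds one. It is an assumption-laden toy, not a description of the tooling in this story: the pattern, `find_markings`, and `should_block_upload` are hypothetical names, and real products inspect far more than a single phrase.

```python
import re

# Hypothetical pattern: matches the "for official use only" marking
# mentioned in the episode, plus its common abbreviation.
MARKING = re.compile(r"\b(for official use only|fouo)\b", re.IGNORECASE)


def find_markings(text: str) -> list[str]:
    """Return every handling marking found in the text, in order."""
    return [match.group(0) for match in MARKING.finditer(text)]


def should_block_upload(text: str) -> bool:
    """Block the upload if the text carries any handling marking."""
    return bool(find_markings(text))


if __name__ == "__main__":
    document = "FOR OFFICIAL USE ONLY\nContract notes for review."
    if should_block_upload(document):
        print("Upload blocked, markings found:", find_markings(document))
    else:
        print("No markings found.")
```

Blocking outright, as this sketch does, is a different design choice from alerting and letting the upload through, which is where an alert can fire and still go unnoticed until afterwards.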

So this actually happened last summer, 2025, and they're only just now coming out with what has happened. Yeah, big scary, huh?

Until fairly recently, it was Jen Easterly who was in charge of CISA, who I think was fairly beloved by the cybersecurity community and she was fired by the Trump administration and she's now heading up the RSA conference.

And I even heard that the US government isn't going to be sending officials from CISA to the RSA conference, which for anyone who isn't aware, is probably the biggest cybersecurity conference.

It's the biggest collection of minds and knowledge and the industry in the entirety of the United States, if not the world.

It's one of the biggest cybersecurity events in the world. So they're boycotting it because the former head of CISA is now in charge of it, which seems again rather short-sighted.

I can't imagine that Jen Easterly would have been uploading these documents to ChatGPT.

Yeah, we created the internet and told people to click on things, and then we created security and said don't click on things. What? Obviously people are going to click on things.

So it's— anyway, I am a huge defender of users in a lot of ways because it is often an accident.

I don't necessarily think this was intentional, obviously, but that's a big accident. And I think when we're talking about the governmental level, and this was the head, right?

So that's the other issue. It's not as if this was an Smashing Security, who did this. So this was the head of the organization. Who answers to that? Who is there?

What is the fallback mechanism for whenever the person who is the leader is the one actually making the mistake?

It's a very interesting issue, and it's going to get even worse the more that we integrate these AI tools, because again, the easier that something is to use for a non-technical person, the easier threats can happen because you're just allowing people opportunities to upload very sensitive information, even things that may not seem sensitive.

To you, it might not be, but to your competitor, that might be exactly what you need. So it's, oh, it's just such an interesting time that we are in.

I've always talked about the human threat in security. I think it's huge. I don't think that we focus on it enough. Even though we talk about it a lot, we don't do it right.

And this is another example of how this can happen in a very real scenario. Government documents were now at risk. Yay.

And it suggests that normally the Department of Homeland Security does have a policy of blocking access to AI tools. It doesn't allow people normally to upload these kind of things.

And it sounds like the interim head, they actually were given permission.

They requested permission to use it, were given the permission and then the alarm bells went off and it's like, whoa, no, it's the age-old story of the bosses think, well, those rules don't apply to me.

Yeah.

So I guess, you know, why would this be any different?

I never ever say anything bad like that, but you've gone ahead and you've upset a percentage of our, to be honest, most of them have probably left already, but anyway.

What do you worry about at 2 o'clock in the morning when it comes to your company's cybersecurity? Is it, do we actually have the right controls in place?

Is it, are our vendors quietly on fire? Or the truly terrifying one, why are we still trying to do all this with spreadsheets? Well, if that sounds like you, enter Vanta.

Vanta takes all that painful manual security busywork, chasing audit evidence, filling out questionnaires, updating the same spreadsheet for the thousandth time, and it automates it.

Their trust management platform continuously monitors your systems, pulls everything into one place, and helps keep your security program audit ready all of the time.

And yes, it uses AI, but in the useful way, flagging risks, streamlining evidence collection, and fitting neatly into the tools you already use.

So you can move faster, scale with confidence, and maybe even sleep through the night. Get started today at vanta.com/smashing. That's vanta.com/smashing.

And thanks to Vanta for supporting the show. And welcome back. And you join us for our favorite part of the show, the part of the show that we like to call Pick of the Week.

Pick of the Week is the part of the show where everyone chooses something, could be a funny story, a book that they read, a TV show, a movie, a record, a podcast, a website, or an app.

Whatever they wish, it doesn't have to be security related necessarily. Well, last week my Pick of the Week was the movie Polite Society, which I greatly enjoyed.

And watching that sent me down a rabbit hole to find other things that the writer and director of that movie had done. Which brought me to a place I'd never been before.

I give you We Are Lady Parts. Are you familiar with Lady Parts at all, Tricia? Or should I not ask such an indelicate question?

We Are Lady Parts is a sharp, funny, punk-powered TV sitcom from Channel 4 here in the UK about an all-female Muslim punk band trying to survive friendship and find their way in the world, all seen through the eyes of a geeky microbiology PhD student.

She's on the lookout for love, and she finds herself recruited to be the band's unlikely lead guitarist. And it's great. Amazing. It's really fun. It's properly funny.

Great characters, cracking dialogue. And it's got a super soundtrack. They have songs like Bashir with a Good Beard and my personal favourite, Voldemort Under My Headscarf.

It's an awful lot of fun. It came out a few years ago. I think it may still be going. There's been at least two series of it so far.

It's well worth your time. Each episode's about, I don't know, 20 or 25 minutes. Really entertaining. Really enjoyed it. And it is We Are Lady Parts. And that is my pick of the week.

HarperCollins, I believe, is the publisher. And the whole book is written in Shakespeare-type dialogue.

And it introduces— I mean, it actually reads like the real Shakespearean play, but with Doctor Who dialogue and characters.

You can't have anything Doctor Who that doesn't have a Dalek involved.

And I just started reading it, so I'm only in the first part of it, but it is hysterical.

If you like theater or Shakespeare, or you just really enjoy a nice crossover, highly recommend. They have a Star Wars one too, which is good.

I really appreciate it. Where can people follow you online and find out what you're up to?

If you just search Google for Tricia Kicks SaaS, I think it's the first one that comes up. And thank you so much. It's such a joy to talk to you and be part of the show.

So thank you for having me again.

You can find me, Graham Cluley, on LinkedIn, or you can follow Smashing Security on BlueSky or Reddit or Mastodon.

And don't forget, to ensure you never miss another episode, follow Smashing Security in your favorite podcast apps such as Apple Podcasts, Spotify, and Pocket Casts.

For episode show notes, sponsorship info, guest lists, and the entire back catalog of 453 episodes, check out smashingsecurity.com. Until next time. Cheerio, bye-bye.

And you know who else we've got to thank? Yep, it's going to be our chums over on Smashing Security Plus, our Patreon platform.

Let me dig into the hat right now and pick out some names. Who've we got here?

Okay, we're going to thank this week: Ryan Hall, Tepotastic— sounds less like a person and more like a limited edition kitchen appliance— Adina Bogut-O'Brien, John W, 636B, still refusing to confirm whether they are a person or a software build; MJ Lee, who could easily be 3 consultants in a trench coat; Steve B, who presumably is good friends or archenemies with John W; Ask Leo, who arrives both with answers and an exclamation mark; and of course, our latest privacy-conscious recruit, Example Name, the patron saint of placeholder text everywhere.

Well, would you like the opportunity to have your name read out occasionally at the end of the show? All you gotta do is join Smashing Security Plus.

For as little as $5 a month, you'll become part of our merry band and get early access to episodes without all of the annoying ads.

Just head over to smashingsecurity.com/plus for more details of that. And you can support us in other ways, of course. You can like, you can subscribe, you can leave a 5-star review.

Or just tell your friends about the show. Go on, spread the word. Every little bit helps, and it really makes all the effort worthwhile.

Well, I will be back next week, and I hope you will too.

I expect you to have your lug holes pressed firmly against your smartphones as I tell you the latest crazy stories of cybersecurity with a fabulous special guest.

Until then, cheerio, bye-bye.

Host:

Graham Cluley

Guest:

Tricia Howard

Episode links:

- Notepad++ hijacked to serve malware in targeted attacks – Notepad++.

- Porn-quitting app caught leaking users’ sexual habits – 404 Media.

- MicroWorld Technologies’ eScan anti-virus update turned into a malware delivery system – Morphisec.

- Jmail.World.

- Informant told FBI that Jeffrey Epstein had a ‘personal hacker’ – TechCrunch.

- Confidential informant statement given to FBI – US Department of Justice.

- Post by Graham Cluley – LinkedIn.

- Trump’s acting cyber chief uploaded sensitive files into a public version of ChatGPT – Politico.

- We are Lady Parts – Channel 4.

- We are Lady Parts trailer – YouTube.

- “Bashir with a good beard” by We are Lady Parts – YouTube.

- “Voldemort under my headscarf” by We are Lady Parts – YouTube.

- Doctor Who: The Shakespeare Notebooks – Penguin.

- Smashing Security merchandise (t-shirts, mugs, stickers and stuff)

Sponsored by:

- Passwork – a reliable secrets manager and password management solution.

- Meter – Network infrastructure for the enterprise. Get a free personalised demo.

- Vanta – Expand the scope of your security program with market-leading compliance automation… while saving time and money. Smashing Security listeners get $1000 off!

Support the show:

You can help the podcast by telling your friends and colleagues about “Smashing Security”, and leaving us a review on Apple Podcasts or Podchaser.

Join Smashing Security PLUS for ad-free episodes and our early-release feed!

Follow us:

Follow the show on Bluesky, or join us on the Smashing Security subreddit, or visit our website for more episodes.

Thanks:

Theme tune: “Vinyl Memories” by Mikael Manvelyan.

Assorted sound effects: AudioBlocks.