Gizmodo’s “security preparedness test” that targeted members of the Trump administration illustrates how everyone and anyone can fall for a phish.

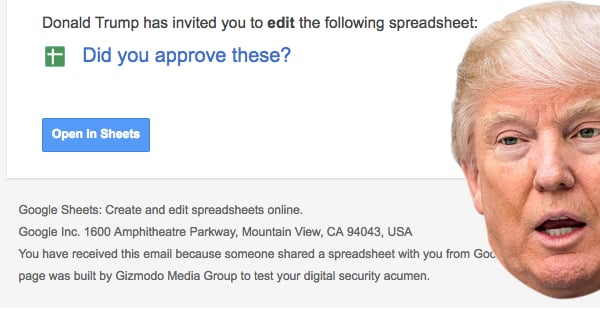

In April 2017, Gizmodo’s reporters sent a “security preparedness test” to 15 members of U.S. President Donald Trump’s administration.

Rudolph Giuliani, Trump’s digital security advisor; Sean Spicer, White House press secretary; and others received an email not entirely unlike the messages sent out by the Google Docs worm in early May.

The message mimicked an invitation to view a spreadsheet in Google Docs. Each email originated from , but the Sender field displayed the name of a friend, colleague, or loved one to add to its legitimacy.
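The gap between a trusted display name and the real sending address is something a mail filter can check mechanically. What follows is a minimal Python sketch of that idea, assuming a hypothetical contact list (`KNOWN_CONTACTS`) and made-up example addresses; it is not a description of how Gizmodo's test or any particular mail provider works.

```python
from email.utils import parseaddr

# Hypothetical contact list: display name -> the address we expect that person to use.
KNOWN_CONTACTS = {
    "Alice Example": "alice@example.org",
}

def display_name_mismatch(from_header: str, known=KNOWN_CONTACTS) -> bool:
    """Return True when the display name belongs to a known contact
    but the actual sending address is not the one on file for them."""
    name, address = parseaddr(from_header)
    expected = known.get(name)
    return expected is not None and address.lower() != expected.lower()

# A genuine message from the contact, then a spoofed display name.
print(display_name_mismatch('"Alice Example" <alice@example.org>'))
print(display_name_mismatch('"Alice Example" <someone@unrelated.example>'))
```

A check like this only catches exact display-name reuse; lookalike names or compromised real accounts would pass straight through.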

See that fine print at the bottom of the page?

“This page was built by Gizmodo Media Group to test your digital security acumen.”

Yeah, the email clearly gives itself away as a means of testing recipients’ “digital security acumen.” The Google logo even links to Gizmodo’s website.

But it’s not hard to imagine that many people might not have noticed that.

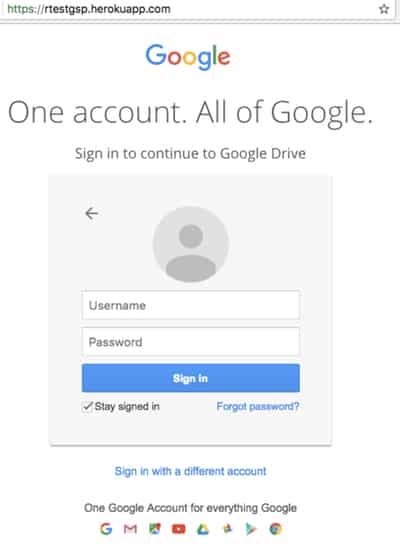

Those who clicked on the link found themselves presented with a fake Google login page that once again displayed the fine print and a linked image.

If someone then entered their credentials, Gizmodo wouldn’t store their password. Instead, the page would display an alert notifying them of the exercise and stating that a reporter would contact them shortly.

The test did induce a few clicks. As Gizmodo’s Ashley Feinberg, Kashmir Hill, and Surya Mattu explain:

“Some of the Trump Administration people completely ignored our email, the right move. But it appears that more than half the recipients clicked the link: Eight different unique devices visited the site, one of them multiple times. There’s no way to tell for sure if the recipients themselves did all the clicking (as opposed to, say, an IT specialist they’d forwarded it to), but seven of the connections occurred within 10 minutes of the emails being sent.”

Fortunately, no-one went so far as to hand over their login credentials.

James Comey, the former director of the FBI, and Newt Gingrich, an informal advisor to the President, even responded to the email inquiring into the contents of the spreadsheet. For the sake of the test’s integrity, Gizmodo didn’t respond to those inquiries.

Public reaction to the exercise has been mixed. Some have pointed to the need for more security awareness training among Trump’s staffers. Others have argued (and argued against) the idea that the activity violated the U.S. Computer Fraud and Abuse Act (CFAA).

1/ What Gizmodo did phishing the Trump administration was not a violation of the CFAA.

— Robᵉʳᵗ Graham (@ErrataRob) May 10, 2017

In this case, the Graham Cluley Security News team agrees with the evaluation of CSO security journalist Steve Ragan.

Like Ragan, we’re hesitant to accept Gizmodo’s use of red team exercises conducted by Facebook and the Department of Homeland Security as precedents for their test. That’s because these simulations required explicit permission – something which Gizmodo never received from the Trump administration. To be effective, these types of tests should also occur across several rounds and log who is entering their credentials. This simulation did neither of these things.

But Gizmodo’s exercise did do something. As Ragan comments:

“In the end, what we have is a story about people who fell for weak Phishing attack, which is a problem organizations and security teams the world over deal with on a daily basis. It isn’t news, it’s reality. Phishing is arguably one of the largest problems a network or individual will face online, and there is no easy answer when it comes to dealing with it. No quick fixes. None.”

No doubt tests such as Gizmodo’s have a place in the Trump administration and every other organization. If your company isn’t conducting its own simulations, it probably should. But to be truly effective in raising your workforce’s security awareness, your company needs to give its permission for an exercise that should be ongoing and encompass all your employees.

To hear more views on Gizmodo’s probe of the Trump administration’s cybersecurity, be sure to listen to our recent “Smashing Security” podcast on the topic.

This transcript was generated automatically, probably contains mistakes, and has not been manually verified.

That's recordedfuture.com/intel. Sounds great.

Hello, hello, and welcome to Smashing Security, Episode 20 for the 11th of May, 2017. My name is Graham Cluley, and I'm joined as ever by my buddy, Carole Theriault. Hello, Carole.

How are you?

We saw it in France just before the French election, of course, and information being published online.

And most famously, perhaps, we saw the hack against the Democratic Party, Hillary Clinton's campaign last year in America.

And well, it looks like some people in the media have got pretty fed up with hearing the old yada, yada, yada from the Trump administration about how much better they are at cybersecurity than the Democrats, and they wouldn't make those kinds of mistakes.

And well, Gizmodo has taken matters into its own hands. And what they actually decided to do—

Well, they sent them something which looked awfully like a phish. The emails pretended to be a Google Docs invite to view a spreadsheet. You know those emails you get?

I mean, I say fairly convincingly because at the bottom of the page, there was a little bit of small print saying, "This page was built by Gizmodo to test your digital security acumen." And obviously, the journalists were waiting to see who would enter their credentials.

I find it weird because, for example, Sophos actually sells a tool called Phish Threat where you can do that kind of phishing test inside your own organization.

But the key thing to all of that is consent and intent and the fact that you're doing it in a way that gives you a chance to engage one-to-one with the people who made the mistake.

They're all inside the organization. It's your network. It's your email.

It's not going out to make fun of the public or something like that, even if they are key figures like politicians.

So it kind of seems to be skating on thin ice, really, doesn't it?

First of all, it wasn't real phishing, although it took people to a fake Google login page. Gizmodo say, "We weren't collecting anything which anyone was going to type in. We obviously weren't going to use that information to log in to someone's account without their permission either."

They say about half of the recipients clicked on the link.

So they sent it to around about 15 people. So I dunno, 7 or 8 people clicked on the link.

Now, those emails pretended to come from colleagues or friends or loved ones. So we saw, for instance, James Comey, who until recently was the FBI chief.

He wrote back believing that the email had come from someone he knew, the editor-in-chief of the Lawfare blog. And he said, hey, I don't want to open this without care. What is it?

They didn't carry on the conversation, which of course a real phisher would've done. They would've replied and said, "Oh, hey honey, here's the shopping list."

I mean, what a nuisance it would be if every Tom, Dick, Harry, Boris decided, oh, we are going to send these phony phishing emails to politicians or to people in business.

What if we were all receiving 30 or 40 of these a day just because a journalist wanted to write a juicy story about what happened and who might have been silly enough to click on the link?

I agree with Duck that if you're going to do something like this, it has to be done with the consent of the organization itself. They should be testing themselves.

There shouldn't be people trying to outwit people in this fashion.

Now I can make hay while the sun shines and then try and convince the world that, oh, well, I didn't have any evil intention.

You get permission first, you get your get out of jail free card.

You get the consent and, you know, it's all done in a way that if you do break in and something goes wrong and a server crashes, somebody on the inside actually knows that trouble might be ahead and all of that sort of stuff.

In other words, in the same way that I guess if you're a first responder, like someone in the fire brigade or ambulance service, you know, you don't do an exercise by actually running someone down in the street and then going and trying to save them.

And there's always an element that yes, there is something synthetic about it.

It's also, if you're going to do a phishing test properly, then, and you want to actually see who gave away data and what did they give away, then there are all sorts of security things that you have to get absolutely right because you are collecting data that if it's inside the organization, maybe you're entitled to see.

There could be an exploit kit or there could be something bad about the very site you're going to.

But if all you know is that the person clicked the link, then that means you can go— if you're inside the company, you can go and say to them, look, let me give you some counseling, let me tell you why that's a bad idea.

But it doesn't mean you actually phished them. If what they get is a page and they then see this is obviously bogus, the HTTPS certificate's wrong or whatever, and they bail out.

And it seems Gizmodo can't measure that in what they're doing.

And they were curious because there's been so much hacking going on and they, in a safe environment, clicked on the link to see what was at the other end.

It may have been part of their investigation.

You know, how many of them put in username?

You know, so it seems that the problem with this is interpreting the results as something that you can only do really internally when you do a test like this.

So yeah, I get the idea. They're public figures, right? So let's see whether they're at risk of clicking on stuff, but it doesn't really prove anything.

And therefore it kind of makes a story where the story is we did an experiment and the results are inconclusive. So why did we bother? Seems to be what it adds up to, to me anyway.

There's a well-known free open source video converter tool called HandBrake, named after the part that you get in a car that stops it rolling down hills when you park.

And it was actually written by the same guy who wrote a BitTorrent client, which is called Transmission, which also of course gets its name from a part in a car.

So thus imagining the connection.

And now last year, Transmission, their actual software distribution site, got hacked not once but twice, where crooks uploaded— so they didn't actually hack the source code or anything, they just uploaded a remix of the official distro that included malware.

Now it happened again with HandBrake. And the Windows people, you're not allowed to laugh or gloat. Well, you can, but you know, don't do it on a webcam.

You can only do it unofficially. They chose to target Mac users. So Windows users who downloaded this bogus HandBrake version, well, there wasn't one. The Windows one was fine.

They just went after Mac users. And what they put in there was this reasonably dangerous Mac spyware called Proton.

And it's designed to try and grab your keychain data, grab all your browsing history for all sorts of different browsers. So it knows about Firefox, Opera, Safari, Chrome.

Apparently what HandBrake has, they've got their main server and that's where they store the source code and all the development.

And they have the main downloads and they have a mirror site for load balancing and online and whatnot, and the mirror site got hacked.

So the good news, if you can call it that, is during this 4 or 5 day window last week, if you did the Mac download, there's basically a 50/50 chance you get the bad one depending on whether you went to the primary or the secondary site.

But the problem is you're going to the real site. It's the genuine site.

If you go and look at the source code, it's all untouched because the crooks have downloaded the source code and just remixed it, added their secret sauce, uploaded it.

You download the DMG file. You run the app as normal and very quietly in the background, it just sets itself up with this whole spyware thing.

It can also take screenshots and set up a little SSH connection that lets the crook send commands.

You're less likely maybe to be paranoid about your downloads.

Something like HandBrake, for instance, it recommends that you check the checksum of the file after downloading it to make sure that it hasn't been tampered with, although I'm sure hardly anybody bothers with that.

But maybe you're even less likely to bother with that if you're a Mac user. And maybe they thought, maybe they thought they could spread it for longer.
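For anyone who does want to bother, the checksum comparison is easy to script. The following is a minimal Python sketch, assuming the project publishes a SHA-256 hex digest and that you obtain it from somewhere other than the server the download came from (otherwise whoever swapped the file could swap the checksum too). The function names are illustrative, not part of any HandBrake tooling.

```python
import hashlib
import hmac

def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large downloads need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_published_checksum(path: str, published_hex: str) -> bool:
    """Compare the file's digest with the value copied from the project's page."""
    expected = published_hex.strip().lower()
    return hmac.compare_digest(sha256_of_file(path), expected)
```

You would call `matches_published_checksum` with the path of the downloaded disk image and the digest pasted from the project's site, and refuse to open the file if it returns `False`.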

But I'm with you, maybe they figured if they do the Windows one, it'll probably get noticed sooner, or might get noticed sooner.

And then if they're actually after Mac users, I guess also if you've got the malware and it knows all about things like Keychain and you haven't done that before, maybe they figure it's a kind of ripe market for exploitation, as it were.

But there are still some who go, no, no, no, no, you'll be fine on the Mac. You'll be fine on the Mac.

The irony is, do you remember, you know, a few years ago everyone said, oh, you can never get malware on a Mac because it'll always pop up and ask you your password so you'll know, as though no other app ever does that.

And the irony is in this case, they actually want your root password or your admin password because they need it to go and get all your keychain stuff.

So they actually pop up a fake dialogue, the good old-fashioned "Oh, you need additional codecs," and you put your password in there.

And you know, for all the Mac users who go, well, that should be suspicious, yes, it should be, but irony of ironies, the other two, you know, video-related apps that do this are Java and Flash.

Those installers both, when you run them, they both require your password because they're installing for the whole system, not just for you.

So you have to sort of forgive somebody for figuring it kind of looks okay, particularly when they know they actually go to the genuine site.

But, you know, you don't have that sort of walled garden, you know, extra security. I mean, this is really another advert for the App Store, isn't it? Getting your Mac apps there.

What I don't like is this increasing pressure or absolute requirement if you're on iOS, that that's the way, the truth, and the life, and from it thou shalt not veer.

And that's the only thing you could do, particularly when you think that with Apple's App Store, ironically, malware does get in there, admittedly only from time to time.

So they're bad at doing a perfect job of keeping malware out, but the one thing they won't let in by design is a proper antivirus program because it needs to do too much.

That's the right way to do it.

And of course you want an antivirus and you want things which do stuff which maybe Apple doesn't approve of for the App Store.

Where an update is pushed out and actually causes a problem.

So I don't know, there's a lot of app developers out there, there's a lot of people providing apps and they're not all necessarily built the same, right?

Some are much more secure than others and some apps are better maintained.

That probably is how most people are updating them.

And so maybe the people who got infected by this particular incident are more likely to be new people trying out the software maybe for the first time.

You think, okay, should I just press the update button in the software or should I just remove it, go and get the very latest version, throw away all my old configuration stuff and kind of make a fresh start?

You know, like when you reinstall Windows and you think, I want to get out of all the, get rid of all those DLLs that got added that I've forgotten about from 1978 and whatnot.

So, you know, people might have just downloaded it anyway, or they could be showing it to a buddy.

Although this, I think was a new variant, wasn't it, of this particular malware. Which maybe hadn't been seen before. Yeah.

You're just copying it to your application folder. You don't need a password for that.

A self-contained app that only is a bundle that goes in your applications directory, it shouldn't need your password to install. Right.

And therefore when you see that pop-up, you should be suspicious.

For example, when you run your browser, every time you try and download a file, it doesn't say, now put in your password before I'll do the download.

And then if you're worried, maybe go and get some objective independent check. The other thing I know, I know, I know I say this all the time. I know you guys do as well.

Whenever you can, two-factor, two-step verification. That's a great thing because then if the crooks get the passwords out of your keychain, they're not any use on their own.

So it's a good reminder that maybe that secondary one-time password check that you can add to your email and most of your accounts these days, turn it on even if you think it's inconvenient.

It can help you a lot.
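To make the one-time password idea concrete, here is a minimal Python sketch of the time-based scheme standardised in RFC 6238 (TOTP), which many authenticator apps use. It assumes a base32-encoded shared secret, a 30-second time step and HMAC-SHA1, which are common defaults but not universal; it is an illustration, not a replacement for a vetted library.

```python
import base64
import hashlib
import hmac
import struct
import time

def totp(secret_b32: str, at=None, step: int = 30, digits: int = 6) -> str:
    """Derive a time-based one-time password from a shared base32 secret."""
    key = base64.b32decode(secret_b32, casefold=True)
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = int((time.time() if at is None else at) // step)
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

# RFC 6238 test secret (ASCII "12345678901234567890"), base32-encoded.
RFC_SECRET = base64.b32encode(b"12345678901234567890").decode()
# RFC 6238 Appendix B lists 94287082 for this secret at T=59 with 8 digits.
print(totp(RFC_SECRET, at=59, digits=8))
```

Because the code depends on the current time as well as the secret, a password lifted from a keychain is not enough on its own to produce a valid one.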

All in the name of improving national security.

So there's this great piece in Ars Technica, which was talking about the DOD cyber strategy, which is to create a cyber mission force.

Now, this is a force that's made up of 133 cyber mission teams.

There is a lack of skilled experts within the armed forces. Now, so that means that the armed forces now have to look civvy side to get their talent, right?

And you think, okay, that's not a big deal, but actually it's a little bit more complicated than you think initially.

So number one, the armed forces tend to focus on a 5 to 10 year development cycle, right? So basically you come in at the bottom and you climb up the ranks.

And pay is not necessarily on par with private sector.

And when I read this, I was like, well, what exactly is boot camp, right? So it's 6 to 13 weeks of extremely intense military training.

Because if you think you're into— you don't mind the physical side and you don't mind the polishing and the whole esprit de corps thing, then you can get a degree.

You can end up without the burden of a student loan. And you get the kind of experience that—

So what they're suggesting is that perhaps these new cyber warriors can skip boot camp and they can be provided with official ranks and pay grades that match their current skill set, right?

Rather than having to come in at the armed forces at the ground level.

So for example, the Marine Corps is discussing the option of letting people with the desired skill set join as uniformed Marines, even though they don't necessarily possess all the skills that we've all come to identify with being a Marine.

You know, the brave, the physical, the mentally strong, ready for battle, et cetera. So, on one side, I kind of get it. It makes it more attractive to hire people.

But on the other hand, we kind of think that everyone who wears a uniform has kind of gone through that. And are they going to get the respect from their fellow officers?

Doesn't it kind of just muddy the whole concept of—

In other words, instead of training someone up in the Marines or the Navy or the Air Force or whatever, and then them going, "Hey, I've done my 10 years, I've done my 15. Should I sign up for longer or should I jump ship/plane and go into the private sector and maybe get more money?" You want people to go the other way.

Why can't they be civilian contractors?

They may want to make sure that they're properly indoctrinated.

I mean, that insider threat is always going to be a problem, isn't it?

And, you know, so you could argue, well, in the Chelsea Manning case, how come no alarms went off when so much data was being copied?

In the Edward Snowden case, you know, how come he had all that power when he had a comparatively short service time, as far as I knew, etc.?

So those are all things, those are all issues that face any organization.

And whether you're an insider or an outsider or a midway contractor, I think that those are problems that you have to solve anyway.

I guess what seems surprising to me in all of this is this 133 units.

It seems like getting all the moving parts to talk to one another or interconnected is going to be really complicated.

It's all DOD and security and there's gonna be a ton of activity going there.

And I mean, think about it for small, you know, there's going to be companies, there's also the private sector who are looking for experts as well, right?

So, there's an absolute shortage. If anyone wants, you know, if anyone's young and they need job security, cybersecurity is the way to go.

I don't think we're going to have any shortage of roles.

There's so much information out there in so many places about what's going on in the world of cybersecurity.

You need some experts to sift through it and find out what's important, what is trending, and deliver that information to you in a timely fashion.

Well, if you're interested in that, the latest information on the hackers, the exploits, the vulnerabilities, if you want that information delivered to you in a meaningful way every day, then sign up for the free Recorded Future Cyber Daily Newsletter.

All you have to do is go to recordedfuture.com/intel. That's recordedfuture.com/intel. And thanks very much to Recorded Future for sponsoring the show.

Okay, I'll make sure I use that laugh when I'm imitating you as well. Didn't know you did that.

Apparently there's little or no public interest defence in the US to protect journalists bending or breaking the law in carefully defined and documented ways in pursuit of a legitimate story. It's been used in the UK to try to defend pretty indefensible tactics like phone hacking and bribing public officials (prison officers, soldiers) for stories and/or pictures that the "normal" reptiles of Fleet St can't get, eg some D division celeb banged up in their cell. HOWEVER, it's also been used to protect legitimate journalistic endeavour. The classic example would be the various demonstrations of smuggling guns and knives airside or even onto aircraft to show up crap airport security. There's clearly a legitimate public interest in publicising the fact that, eg, airport security doesn't work very well. And if security's improved as a consequence, that's a bonus.

The exercise results are decidedly mixed. From the article: "Fortunately, no-one went so far as to hand over their login credentials." So none of the targets actually fell for the phish, contrary to the headline and lead paragraph. That is good news. It is, on the other hand, bad news if anyone followed the link from anywhere other than an isolated secure environment, an act that could have allowed the introduction of a compromise. Unfortunately that seems likely in view of the reported short delay between sending of the message and activation of the link. Officials (and those who screen their email) need to do a better job, as hard as that may be given the likely volume of their email correspondence.